Think fast: what is the main feature of an iPhone today? The answer you will hear most often is the camera, and with good reason. Smartphones are the cameras of the modern world and, since the iPhone is one of the best-selling smartphones in the world, its camera is the most used around the globe.

Apple knows this and, with each new generation, tries to improve on what is already spectacular. This obsession with photography in a device that fits in the palm of the hand is paying off: as we reported here on the site, the iPhone 8 and 8 Plus cameras are currently the best on the market, according to tests conducted by DxO Labs. The iPhone X is well placed to surpass them, since it has optical image stabilization on both rear cameras and a telephoto lens with an ƒ/2.4 aperture (against ƒ/2.8 on the 8 Plus).

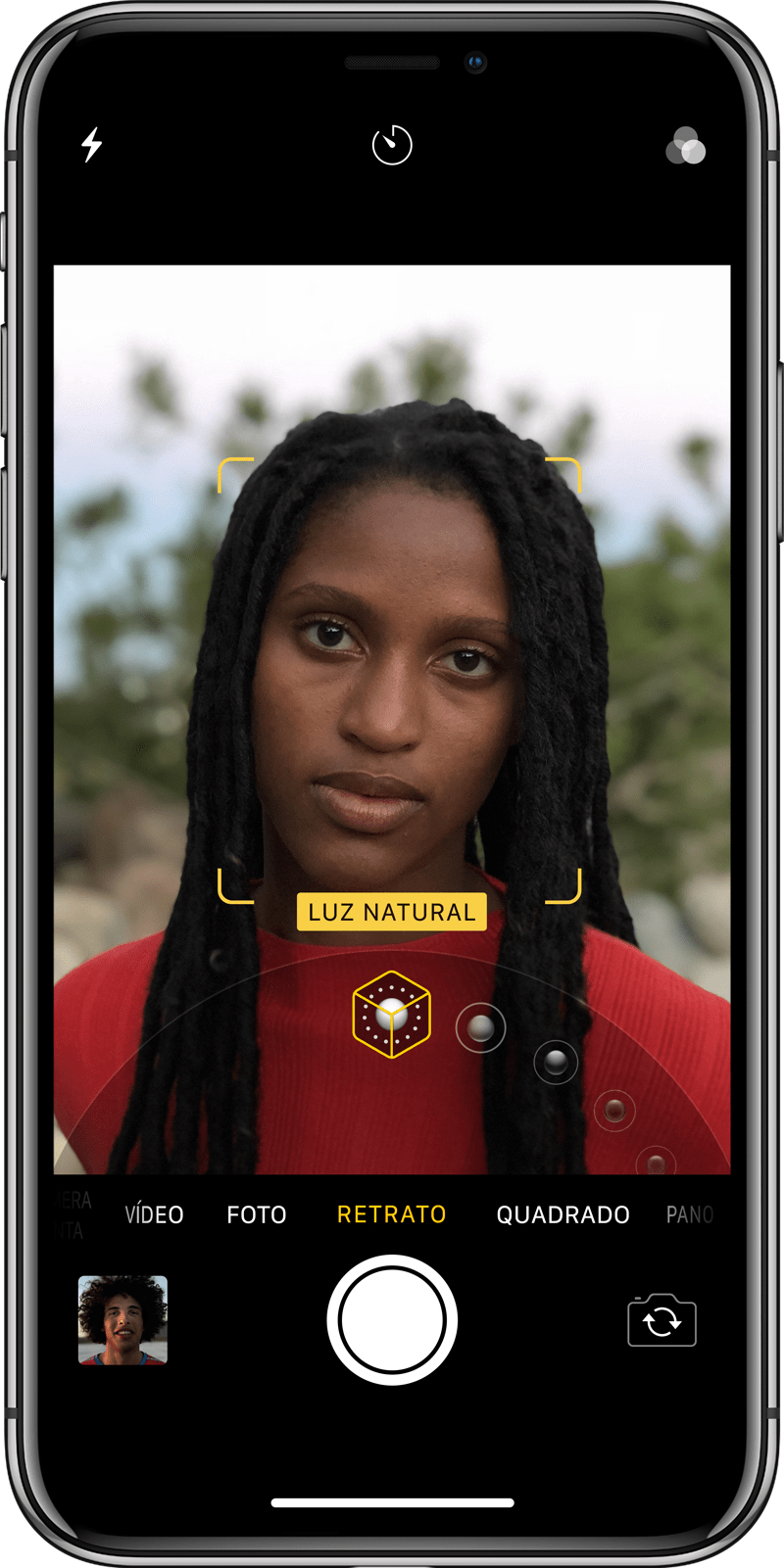

Hardware improvements aside, the big news Apple presented this year was Portrait Lighting. According to Apple, the feature combines the depth sensor and facial mapping to create studio-quality lighting effects. In other words, it is not a simple filter applied on top of a photo you have taken or are about to take. It is Apple’s dual-camera system working together with machine learning to recognize a scene, map its depth and then change the lighting contours of the photographed subject. Everything is done in real time, and you can even preview the result live thanks to the A11 Bionic chip.

Considering the scale at which Apple operates, I couldn’t agree more with this statement by John Paczkowski of BuzzFeed News, who spoke with several people inside Apple on the subject:

The result, when applied to the Apple scale, has the power to be transformative for modern photography, with millions of amateur clicks suddenly professionalized. In many ways, it is the most complete achievement of the democratization of high-quality images that the company has been working on since the iPhone 4.

I’m not saying that the photographer’s profession is over or that the iPhone alone solves anyone’s photographic problems. Of course it doesn’t. Put an iPhone 8 Plus in the hands of an ordinary user and another in the hands of a professional photographer, and you will quickly understand what I mean. But it is undeniable that we no longer depend on equipment created specifically for professional photographers to take incredible photos, with a quality never before imagined for a device the size of a smartphone.

How did Apple achieve this? Through a lot of study and by deconstructing the art form the company wants to imitate. In the case of the new feature on the iPhone 8 Plus and iPhone X, this meant studying how others (Richard Avedon, Annie Leibovitz and Vermeer) used lighting throughout history.

If you look at the Dutch masters and compare them to the paintings that were being made in Asia, they are stylistically different. So we asked: why are they different? And what elements of these styles can we recreate with software?

We spent a lot of time lighting up people and moving them around, a lot of time. We had some engineers trying to understand the contours of a face and how we could apply the lighting through the software, and we had other silicon engineers working to make the process super-fast. We really did a lot.

–Johnnie Manzari, designer on Apple’s human interface team.

Phil Schiller, Apple’s worldwide marketing chief and an enthusiastic photographer, explained a little more about Apple’s creative process and the close collaboration between several teams:

There is the augmented reality team saying, “Hey, we need to get more out of the camera because we want to make augmented reality a better experience and the camera plays a role in that.” And the team that is creating Face ID needs camera technology, hardware, software, sensors and lenses to support biometric identification on the device. So there are many roles that the camera plays, either as the primary thing, taking a photo, or in a supporting role, helping to unlock your phone or enable an AR experience. And so there is a great deal of work among all the teams and all these elements.

We are at a time when the biggest advances in camera technology are happening in both software and hardware. And this, of course, plays to Apple’s strengths over those of traditional camera companies.

When asked about the evolution of the iPhone camera, Schiller acknowledged that the company has been working deliberately and incrementally toward a professional-caliber camera. Still, he said the idea is not just to create a better camera, but to ask how Apple can contribute to photography.

Paczkowski raised an interesting question about the feature: is anything lost when we use software to simplify and automate a historically artistic process? After all, there is something slightly dystopian about pressing a button and essentially narrowing the huge gap that exists between professionals and amateurs.

For Manzari, it is not about simplifying or dumbing things down, but about accessibility: helping people to take advantage of their own creativity. His point is that there are many good photographers who are not professionally trained and who do not need or want to deal with a series of lenses and tools to calibrate focus and depth of field when taking pictures. Nor, in fact, should they have to. So why not take all these things and give people something that helps them take great pictures?

For Schiller, the feature is not an attempt to imitate any specific style, but rather to cover the range of styles that exist, so that there is something for everyone. The idea is not to make “Stage Light” look like Vermeer, but to offer enough range that everyone has different choices for the many situations that make up the main use cases. For that, Apple did have to learn how others have used lighting throughout history and around the world.

The improvements in the cameras of the new devices do not stop there, obviously. Apple has been working on enhancements that are much less flashy than Portrait Mode and Portrait Lighting. The cameras on the 8 Plus and X, for example, detect snow as a type of scene and automatically adjust white balance, exposure and other settings so that the user does not have to worry about anything. “It’s all seamless, the camera just does what it needs to do,” said Schiller. “The software knows how to take care of the photo for you. There are no settings.”

We think the best way to build a camera is to ask simple, fundamental questions about photography. What does it mean to be a photographer? What does it mean to capture a memory? If you start out like this, and not with a long list of possible features to incorporate, you often end up with something better. When you take the complexity out of how the camera works, the technology just disappears. Then people can apply all their creativity to the moment they are capturing. And you end up with some incredible photographs.

So it’s good.

via Daring Fireball